
```python
# imports
import matplotlib.pyplot as plt
import numpy as np

# TensorFlow (used as the Keras backend) and standalone Keras
import tensorflow as tf
from keras.datasets import fashion_mnist
from keras.utils import np_utils
from keras.models import Sequential
from keras.layers import Dense
from keras import optimizers
from keras import initializers

print(tf.__version__)
```

1.13.1

Using TensorFlow backend.


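```python
# setup: load Fashion-MNIST (60,000 training and 10,000 test images, 28x28 grayscale)
(train_images, train_labels), (test_images, test_labels) = \
    fashion_mnist.load_data()

# Class names, indexed by the integer labels 0-9
class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress',
               'Coat', 'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']
```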


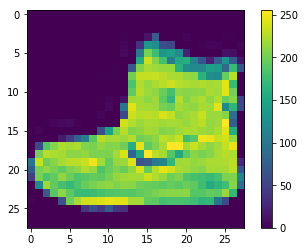

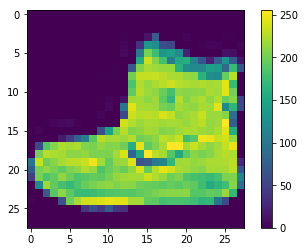

```python
# dataviz for fashion_mnist data
plt.figure()
plt.imshow(train_images[0])
plt.colorbar()
plt.grid(False)
plt.show()
```


```python
# normalize training and test data from [0, 255] to [0, 1]
train_images = train_images / 255.0
test_images = test_images / 255.0
```
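A small NumPy sketch of what this scaling does to `uint8` pixel values, using a made-up 2x2 image (hypothetical data, not taken from Fashion-MNIST). It also shows what happens if the already-scaled array is divided by 255 a second time, which is what the later "Model design" cell does to `X_train` and `X_test`.

```python
import numpy as np

# Hypothetical 2x2 "image" containing the extreme uint8 values 0 and 255
pixels = np.array([[0, 64], [128, 255]], dtype=np.uint8)

# Single scaling: uint8 [0, 255] -> float64 [0.0, 1.0]
scaled = pixels / 255.0
print(scaled.dtype, scaled.min(), scaled.max())  # float64 0.0 1.0

# Dividing the scaled array by 255 again squeezes every pixel into [0, 1/255]
double_scaled = scaled / 255
print(double_scaled.max())  # about 0.0039
```

Checking `X_train.max()` before training is a cheap way to confirm which of the two ranges a model actually sees.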


```python
# Draw the first 25 training images with their class names
plt.figure(figsize=(10, 10))
for i in range(25):
    plt.subplot(5, 5, i + 1)
    plt.xticks([])
    plt.yticks([])
    plt.grid(False)
    plt.imshow(train_images[i], cmap=plt.cm.binary)
    plt.xlabel(class_names[train_labels[i]])
plt.show()
```


```python
# Model design
num_pixels = train_images.shape[1] * train_images.shape[2]  # 28*28 = 784
X_train = train_images.reshape(train_images.shape[0], num_pixels)
X_test = test_images.reshape(test_images.shape[0], num_pixels)

# NOTE: train_images/test_images were already divided by 255.0 in the
# normalization step, so this second division scales the inputs to
# [0, 1/255] rather than [0, 1]. The results reported below were produced
# with this double scaling; dropping these two lines keeps inputs in [0, 1].
X_train = X_train / 255
X_test = X_test / 255

Y_test = test_labels  # integer test labels (not used below)

# one-hot encode outputs
y_train = np_utils.to_categorical(train_labels)
y_test = np_utils.to_categorical(test_labels)

hidden_nodes = 128
num_classes = y_test.shape[1]  # 10

def baseline_model():
    # create model: 784 inputs -> 784 (ReLU) -> 128 (ReLU) -> 10 (softmax)
    model = Sequential()
    model.add(Dense(num_pixels, input_dim=num_pixels, activation='relu'))
    model.add(Dense(hidden_nodes, activation='relu'))
    model.add(Dense(num_classes, activation='softmax'))
    # plain stochastic gradient descent with learning rate 0.01
    sgd = optimizers.SGD(lr=0.01)
    # Compile model
    model.compile(loss='categorical_crossentropy', optimizer=sgd,
                  metrics=['accuracy'])
    return model
```
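The reshape and one-hot steps above can be mirrored in plain NumPy, which makes the shapes easy to check without loading the dataset. `flatten_images`, `one_hot` and `dense_params` are hypothetical helpers written for illustration. `one_hot` is assumed to behave like `np_utils.to_categorical` for 1-D integer labels, including inferring the class count as `max(label) + 1`, so `num_classes = y_test.shape[1]` comes out as 10 because label 9 occurs in the test set. `dense_params` counts the weights plus biases of stacked `Dense` layers.

```python
import numpy as np

def flatten_images(images):
    """Reshape (n, height, width) images into (n, height*width) row vectors."""
    n = images.shape[0]
    return images.reshape(n, images.shape[1] * images.shape[2])

def one_hot(labels, num_classes=None):
    """One-hot encode 1-D integer labels (assumed equivalent of to_categorical)."""
    labels = np.asarray(labels, dtype=int)
    if num_classes is None:
        num_classes = labels.max() + 1  # inferred from the largest label
    return np.eye(num_classes, dtype=np.float32)[labels]

def dense_params(layer_sizes):
    """Weights + biases of fully connected layers, e.g. [784, 784, 128, 10]."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

print(flatten_images(np.zeros((3, 28, 28))).shape)  # (3, 784)
print(one_hot([0, 9, 3]).shape)                      # (3, 10)
print(dense_params([784, 784, 128, 10]))             # 717210
print(dense_params([784, 128, 128, 10]))             # 118282
```

For the layer sizes in `baseline_model` this gives 717,210 trainable parameters, versus 118,282 for the network with two 128-unit hidden layers used in the SGD and Adam runs; `model.summary()` should report the same totals.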


```python
# Train and test
model = baseline_model()

# Fit the model: 80 epochs, mini-batches of 200 samples
history = model.fit(
    X_train,
    y_train,
    epochs=80,
    batch_size=200
)

# Final evaluation of the model; scores = [test loss, test accuracy]
scores = model.evaluate(X_test, y_test)
print("Accuracy: %.2f%%" % (scores[1]*100))
```

WARNING:tensorflow:From C:\Tools\miniconda3\envs\ANLY535\lib\site-packages\tensorflow\python\framework\op_def_library.py:263: colocate_with (from tensorflow.python.framework.ops) is deprecated and will be removed in a future version.

Instructions for updating:

Colocations handled automatically by placer.

WARNING:tensorflow:From C:\Tools\miniconda3\envs\ANLY535\lib\site-packages\tensorflow\python\ops\math_ops.py:3066: to_int32 (from tensorflow.python.ops.math_ops) is deprecated and will be removed in a future version.

Instructions for updating:

Use tf.cast instead.

Epoch 1/80

60000/60000 [==============================] - 4s 61us/step - loss: 2.2996 - acc: 0.2694

Epoch 2/80

60000/60000 [==============================] - 3s 50us/step - loss: 2.2987 - acc: 0.2799

Epoch 3/80

60000/60000 [==============================] - 3s 48us/step - loss: 2.2984 - acc: 0.3273

Epoch 4/80

60000/60000 [==============================] - 3s 47us/step - loss: 2.2982 - acc: 0.3075

Epoch 5/80

60000/60000 [==============================] - 3s 48us/step - loss: 2.2979 - acc: 0.2849

Epoch 6/80

60000/60000 [==============================] - 3s 49us/step - loss: 2.2976 - acc: 0.3340

Epoch 7/80

60000/60000 [==============================] - 3s 53us/step - loss: 2.2973 - acc: 0.3789

Epoch 8/80

60000/60000 [==============================] - 4s 62us/step - loss: 2.2970 - acc: 0.3198

Epoch 9/80

60000/60000 [==============================] - 3s 58us/step - loss: 2.2968 - acc: 0.3629

Epoch 10/80

60000/60000 [==============================] - 4s 62us/step - loss: 2.2964 - acc: 0.3555

Epoch 11/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2961 - acc: 0.3422

Epoch 12/80

60000/60000 [==============================] - 3s 56us/step - loss: 2.2958 - acc: 0.3868

Epoch 13/80

60000/60000 [==============================] - 3s 58us/step - loss: 2.2955 - acc: 0.3637

Epoch 14/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2951 - acc: 0.4177

Epoch 15/80

60000/60000 [==============================] - 3s 56us/step - loss: 2.2947 - acc: 0.3981

Epoch 16/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2944 - acc: 0.3546

Epoch 17/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2940 - acc: 0.4396

Epoch 18/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2936 - acc: 0.4661

Epoch 19/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2931 - acc: 0.4141

Epoch 20/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2927 - acc: 0.4457

Epoch 21/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2922 - acc: 0.4444

Epoch 22/80

60000/60000 [==============================] - 3s 57us/step - loss: 2.2917 - acc: 0.4238

Epoch 23/80

60000/60000 [==============================] - 3s 58us/step - loss: 2.2912 - acc: 0.4688

Epoch 24/80

60000/60000 [==============================] - 3s 58us/step - loss: 2.2907 - acc: 0.4571

Epoch 25/80

60000/60000 [==============================] - 3s 58us/step - loss: 2.2902 - acc: 0.4834

Epoch 26/80

60000/60000 [==============================] - 3s 58us/step - loss: 2.2896 - acc: 0.4782

Epoch 27/80

60000/60000 [==============================] - 4s 59us/step - loss: 2.2890 - acc: 0.4789

Epoch 28/80

60000/60000 [==============================] - 4s 59us/step - loss: 2.2883 - acc: 0.4625

Epoch 29/80

60000/60000 [==============================] - 4s 59us/step - loss: 2.2876 - acc: 0.5180

Epoch 30/80

60000/60000 [==============================] - 4s 59us/step - loss: 2.2869 - acc: 0.4787

Epoch 31/80

60000/60000 [==============================] - 4s 61us/step - loss: 2.2862 - acc: 0.4800

Epoch 32/80

60000/60000 [==============================] - 4s 60us/step - loss: 2.2854 - acc: 0.4896

Epoch 33/80

60000/60000 [==============================] - 4s 60us/step - loss: 2.2845 - acc: 0.4908

Epoch 34/80

60000/60000 [==============================] - 4s 60us/step - loss: 2.2837 - acc: 0.4907

Epoch 35/80

60000/60000 [==============================] - 4s 60us/step - loss: 2.2827 - acc: 0.5088

Epoch 36/80

60000/60000 [==============================] - 4s 60us/step - loss: 2.2817 - acc: 0.4600

Epoch 37/80

60000/60000 [==============================] - 4s 60us/step - loss: 2.2807 - acc: 0.5172

Epoch 38/80

60000/60000 [==============================] - 4s 60us/step - loss: 2.2796 - acc: 0.4870

Epoch 39/80

60000/60000 [==============================] - 4s 61us/step - loss: 2.2784 - acc: 0.5112

Epoch 40/80

60000/60000 [==============================] - 4s 61us/step - loss: 2.2771 - acc: 0.4713

Epoch 41/80

60000/60000 [==============================] - 4s 61us/step - loss: 2.2758 - acc: 0.4901

Epoch 42/80

60000/60000 [==============================] - 4s 61us/step - loss: 2.2743 - acc: 0.4810

Epoch 43/80

60000/60000 [==============================] - 4s 62us/step - loss: 2.2728 - acc: 0.4905

Epoch 44/80

60000/60000 [==============================] - 4s 63us/step - loss: 2.2711 - acc: 0.4800

Epoch 45/80

60000/60000 [==============================] - 4s 62us/step - loss: 2.2694 - acc: 0.4817

Epoch 46/80

60000/60000 [==============================] - 4s 62us/step - loss: 2.2675 - acc: 0.5013

Epoch 47/80

60000/60000 [==============================] - 4s 64us/step - loss: 2.2654 - acc: 0.4616

Epoch 48/80

60000/60000 [==============================] - 4s 63us/step - loss: 2.2632 - acc: 0.4877

Epoch 49/80

60000/60000 [==============================] - 4s 63us/step - loss: 2.2608 - acc: 0.4841

Epoch 50/80

60000/60000 [==============================] - 4s 63us/step - loss: 2.2582 - acc: 0.4572

Epoch 51/80

60000/60000 [==============================] - 4s 66us/step - loss: 2.2554 - acc: 0.4645

Epoch 52/80

60000/60000 [==============================] - 4s 68us/step - loss: 2.2524 - acc: 0.4562

Epoch 53/80

60000/60000 [==============================] - 4s 74us/step - loss: 2.2491 - acc: 0.4568

Epoch 54/80

60000/60000 [==============================] - 4s 72us/step - loss: 2.2455 - acc: 0.4789

Epoch 55/80

60000/60000 [==============================] - 4s 68us/step - loss: 2.2416 - acc: 0.4548

Epoch 56/80

60000/60000 [==============================] - 4s 69us/step - loss: 2.2373 - acc: 0.4511

Epoch 57/80

60000/60000 [==============================] - 5s 84us/step - loss: 2.2327 - acc: 0.4359

Epoch 58/80

60000/60000 [==============================] - 5s 76us/step - loss: 2.2276 - acc: 0.4640

Epoch 59/80

60000/60000 [==============================] - 5s 78us/step - loss: 2.2220 - acc: 0.4459

Epoch 60/80

60000/60000 [==============================] - 4s 74us/step - loss: 2.2159 - acc: 0.4613

Epoch 61/80

60000/60000 [==============================] - 5s 76us/step - loss: 2.2091 - acc: 0.4213

Epoch 62/80

60000/60000 [==============================] - 4s 75us/step - loss: 2.2017 - acc: 0.4394

Epoch 63/80

60000/60000 [==============================] - 4s 72us/step - loss: 2.1936 - acc: 0.4389

Epoch 64/80

60000/60000 [==============================] - 5s 75us/step - loss: 2.1847 - acc: 0.4191

Epoch 65/80

60000/60000 [==============================] - 4s 75us/step - loss: 2.1749 - acc: 0.4372

Epoch 66/80

60000/60000 [==============================] - 4s 74us/step - loss: 2.1641 - acc: 0.4135

Epoch 67/80

60000/60000 [==============================] - 5s 75us/step - loss: 2.1522 - acc: 0.4197

Epoch 68/80

60000/60000 [==============================] - 4s 69us/step - loss: 2.1391 - acc: 0.4246

Epoch 69/80

60000/60000 [==============================] - 4s 69us/step - loss: 2.1250 - acc: 0.4186

Epoch 70/80

60000/60000 [==============================] - 4s 73us/step - loss: 2.1095 - acc: 0.3967

Epoch 71/80

60000/60000 [==============================] - 4s 71us/step - loss: 2.0926 - acc: 0.4274

Epoch 72/80

60000/60000 [==============================] - 4s 74us/step - loss: 2.0745 - acc: 0.4060

Epoch 73/80

60000/60000 [==============================] - 4s 69us/step - loss: 2.0550 - acc: 0.4091

Epoch 74/80

60000/60000 [==============================] - 4s 69us/step - loss: 2.0343 - acc: 0.4186

Epoch 75/80

60000/60000 [==============================] - 4s 71us/step - loss: 2.0125 - acc: 0.4071

Epoch 76/80

60000/60000 [==============================] - 4s 69us/step - loss: 1.9895 - acc: 0.4018

Epoch 77/80

60000/60000 [==============================] - 4s 70us/step - loss: 1.9657 - acc: 0.4049

Epoch 78/80

60000/60000 [==============================] - 4s 69us/step - loss: 1.9414 - acc: 0.4284

Epoch 79/80

60000/60000 [==============================] - 4s 70us/step - loss: 1.9165 - acc: 0.4145

Epoch 80/80

60000/60000 [==============================] - 4s 69us/step - loss: 1.8913 - acc: 0.4164

10000/10000 [==============================] - 1s 72us/step

Accuracy: 41.31%
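The reported accuracy is, as we understand Keras's handling of `metrics=['accuracy']` with `categorical_crossentropy`, the fraction of samples whose highest softmax probability falls on the true class. A minimal NumPy sketch with hypothetical predictions (`categorical_accuracy` here is an illustrative helper, not the Keras function). It also prints ln(10), the cross-entropy of a uniform guess over 10 classes, which is a useful reference point: the baseline's loss starts at about 2.30 and is still at 1.89 after 80 epochs.

```python
import numpy as np

def categorical_accuracy(y_true_onehot, y_prob):
    """Fraction of rows where the most probable class matches the one-hot label."""
    return float(np.mean(np.argmax(y_true_onehot, axis=1) == np.argmax(y_prob, axis=1)))

# Hypothetical softmax outputs for 4 samples and 3 classes
y_prob = np.array([[0.7, 0.2, 0.1],
                   [0.1, 0.8, 0.1],
                   [0.3, 0.3, 0.4],
                   [0.5, 0.4, 0.1]])
y_true = np.eye(3)[[0, 1, 2, 1]]  # true classes 0, 1, 2, 1

acc = categorical_accuracy(y_true, y_prob)
print("Accuracy: %.2f%%" % (acc * 100))  # Accuracy: 75.00%

# Cross-entropy of predicting 1/10 for every class (chance level for 10 classes)
print(round(float(np.log(10)), 4))  # 2.3026
```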


```python
# Smaller network (two 128-unit hidden layers) with explicit
# RandomNormal weight initialization, trained with plain SGD
def model_SDG():
    # create model: 784 inputs -> 128 (ReLU) -> 128 (ReLU) -> 10 (softmax)
    model = Sequential()
    model.add(Dense(
        hidden_nodes,
        input_dim=num_pixels,
        kernel_initializer=initializers.RandomNormal(mean=0.0, stddev=0.05),
        activation='relu'))
    model.add(Dense(
        hidden_nodes,
        kernel_initializer=initializers.RandomNormal(mean=0.0, stddev=0.125),
        activation='relu'))
    model.add(Dense(
        num_classes,
        kernel_initializer=initializers.RandomNormal(mean=0.0, stddev=0.32),
        activation='softmax'))
    sgd = optimizers.SGD(lr=0.01)
    # Compile model
    model.compile(
        loss='categorical_crossentropy',
        optimizer=sgd,
        metrics=['accuracy']
    )
    return model

modelSDG = model_SDG()

# Fit the model
historySDG = modelSDG.fit(
    X_train,
    y_train,
    epochs=80,
    batch_size=200
)

# Final evaluation of the model
scores = modelSDG.evaluate(X_test, y_test)
print("Accuracy: %.2f%%" % (scores[1]*100))
```

Epoch 1/80

60000/60000 [==============================] - 1s 24us/step - loss: 2.2919 - acc: 0.2038

Epoch 2/80

60000/60000 [==============================] - 1s 21us/step - loss: 2.2895 - acc: 0.2576

Epoch 3/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2875 - acc: 0.2811

Epoch 4/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2854 - acc: 0.3086

Epoch 5/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2831 - acc: 0.2952

Epoch 6/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2809 - acc: 0.3340

Epoch 7/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2783 - acc: 0.3531

Epoch 8/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2757 - acc: 0.3628

Epoch 9/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2729 - acc: 0.3777

Epoch 10/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2699 - acc: 0.3756

Epoch 11/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2668 - acc: 0.3775

Epoch 12/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2634 - acc: 0.4147

Epoch 13/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2597 - acc: 0.4023

Epoch 14/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.2558 - acc: 0.3984

Epoch 15/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2515 - acc: 0.4087

Epoch 16/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2469 - acc: 0.3909

Epoch 17/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2418 - acc: 0.3966

Epoch 18/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2364 - acc: 0.4116

Epoch 19/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.2305 - acc: 0.4110

Epoch 20/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2240 - acc: 0.4003

Epoch 21/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2171 - acc: 0.4063

Epoch 22/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2094 - acc: 0.4021

Epoch 23/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.2010 - acc: 0.4203

Epoch 24/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.1919 - acc: 0.4218

Epoch 25/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.1819 - acc: 0.4111

Epoch 26/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.1709 - acc: 0.4056

Epoch 27/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.1590 - acc: 0.4063

Epoch 28/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.1459 - acc: 0.4003

Epoch 29/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.1316 - acc: 0.3912

Epoch 30/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.1163 - acc: 0.4214

Epoch 31/80

60000/60000 [==============================] - 1s 19us/step - loss: 2.0993 - acc: 0.4191

Epoch 32/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.0812 - acc: 0.4275

Epoch 33/80

60000/60000 [==============================] - 1s 21us/step - loss: 2.0617 - acc: 0.4323

Epoch 34/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.0410 - acc: 0.4250

Epoch 35/80

60000/60000 [==============================] - 1s 20us/step - loss: 2.0188 - acc: 0.4345

Epoch 36/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.9953 - acc: 0.4249

Epoch 37/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.9709 - acc: 0.4439

Epoch 38/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.9456 - acc: 0.4431

Epoch 39/80

60000/60000 [==============================] - 1s 19us/step - loss: 1.9194 - acc: 0.4649

Epoch 40/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.8926 - acc: 0.4708

Epoch 41/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.8654 - acc: 0.4773

Epoch 42/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.8380 - acc: 0.4872

Epoch 43/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.8109 - acc: 0.4912

Epoch 44/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.7833 - acc: 0.4876

Epoch 45/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.7564 - acc: 0.4993

Epoch 46/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.7296 - acc: 0.5092

Epoch 47/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.7036 - acc: 0.5131

Epoch 48/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.6777 - acc: 0.5213

Epoch 49/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.6525 - acc: 0.5200

Epoch 50/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.6278 - acc: 0.5247

Epoch 51/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.6036 - acc: 0.5254

Epoch 52/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.5803 - acc: 0.5316

Epoch 53/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.5578 - acc: 0.5352

Epoch 54/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.5355 - acc: 0.5341

Epoch 55/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.5141 - acc: 0.5394

Epoch 56/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.4931 - acc: 0.5392

Epoch 57/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.4732 - acc: 0.5447

Epoch 58/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.4540 - acc: 0.5472

Epoch 59/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.4353 - acc: 0.5483

Epoch 60/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.4172 - acc: 0.5517

Epoch 61/80

60000/60000 [==============================] - 1s 22us/step - loss: 1.4001 - acc: 0.5551

Epoch 62/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.3837 - acc: 0.5576

Epoch 63/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.3679 - acc: 0.5599

Epoch 64/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.3526 - acc: 0.5603

Epoch 65/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.3383 - acc: 0.5609

Epoch 66/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.3244 - acc: 0.5664

Epoch 67/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.3107 - acc: 0.5702

Epoch 68/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.2978 - acc: 0.5706

Epoch 69/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.2855 - acc: 0.5744

Epoch 70/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.2735 - acc: 0.5739

Epoch 71/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.2623 - acc: 0.5766

Epoch 72/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.2513 - acc: 0.5772

Epoch 73/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.2410 - acc: 0.5817

Epoch 74/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.2309 - acc: 0.5818

Epoch 75/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.2209 - acc: 0.5846

Epoch 76/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.2115 - acc: 0.5866

Epoch 77/80

60000/60000 [==============================] - 1s 20us/step - loss: 1.2018 - acc: 0.5893

Epoch 78/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.1935 - acc: 0.5921

Epoch 79/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.1847 - acc: 0.5919

Epoch 80/80

60000/60000 [==============================] - 1s 21us/step - loss: 1.1758 - acc: 0.5956

10000/10000 [==============================] - 0s 27us/step

Accuracy: 57.74%
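The text does not say how the three `stddev` values in `model_SDG` were chosen. As an observation of ours, not the author's stated method, the first two match the He-style rule sigma = sqrt(2 / fan_in) for fan-in 784 and 128, while 0.32 does not follow that rule. The sketch below computes that rule, draws a weight matrix analogous to `RandomNormal(mean=0.0, stddev=0.05)` with NumPy, and shows one plain SGD step, w <- w - lr * grad, with a hypothetical gradient. `he_std` and `sgd_step` are illustrative helpers.

```python
import numpy as np

def he_std(fan_in):
    """He-style initialization scale sqrt(2 / fan_in), often used before ReLU layers."""
    return np.sqrt(2.0 / fan_in)

for fan_in in (784, 128):
    print(fan_in, round(float(he_std(fan_in)), 4))
# 784 0.0505
# 128 0.125

# A 784x128 kernel drawn from N(0, 0.05^2), analogous to the first layer's initializer
rng = np.random.default_rng(0)
kernel = rng.normal(loc=0.0, scale=0.05, size=(784, 128))

def sgd_step(w, grad, lr=0.01):
    """Plain SGD (no momentum): move against the gradient by lr * grad."""
    return w - lr * grad

w = np.zeros(3)
grad = np.array([0.5, -2.0, 1e-3])  # hypothetical gradient spanning three orders of magnitude
print(sgd_step(w, grad))            # each step is proportional to its gradient entry
```

Because the SGD step is proportional to the gradient, weights with very small gradients barely move at `lr=0.01`, which is consistent with the slow decrease of the loss in the log above.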


```python
# Same architecture and initialization as model_SDG,
# trained with the Adam optimizer (Keras default settings)
def model_ADAM():
    # create model: 784 inputs -> 128 (ReLU) -> 128 (ReLU) -> 10 (softmax)
    model = Sequential()
    model.add(Dense(
        hidden_nodes,
        input_dim=num_pixels,
        kernel_initializer=initializers.RandomNormal(mean=0.0, stddev=0.05),
        activation='relu'))
    model.add(Dense(
        hidden_nodes,
        kernel_initializer=initializers.RandomNormal(mean=0.0, stddev=0.125),
        activation='relu'))
    model.add(Dense(
        num_classes,
        kernel_initializer=initializers.RandomNormal(mean=0.0, stddev=0.32),
        activation='softmax'))
    # Compile model
    model.compile(
        loss='categorical_crossentropy',
        optimizer='adam',
        metrics=['accuracy']
    )
    return model

modelADAM = model_ADAM()

# Fit the model
historyADAM = modelADAM.fit(
    X_train,
    y_train,
    epochs=80,
    batch_size=200
)

# Final evaluation of the model
scores = modelADAM.evaluate(X_test, y_test)
print("Accuracy: %.2f%%" % (scores[1]*100))
```

Epoch 1/80

60000/60000 [==============================] - 1s 25us/step - loss: 1.1629 - acc: 0.6260

Epoch 2/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.6292 - acc: 0.7675

Epoch 3/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.5534 - acc: 0.8001

Epoch 4/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.5117 - acc: 0.8165

Epoch 5/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.4831 - acc: 0.8287

Epoch 6/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.4608 - acc: 0.8367

Epoch 7/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.4444 - acc: 0.8418

Epoch 8/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.4315 - acc: 0.8467

Epoch 9/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.4203 - acc: 0.8509

Epoch 10/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.4112 - acc: 0.8547

Epoch 11/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.4043 - acc: 0.8556

Epoch 12/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.3952 - acc: 0.8591

Epoch 13/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.3900 - acc: 0.8601

Epoch 14/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.3838 - acc: 0.8621

Epoch 15/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.3795 - acc: 0.8645

Epoch 16/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.3738 - acc: 0.8661

Epoch 17/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.3698 - acc: 0.8669

Epoch 18/80

60000/60000 [==============================] - 1s 21us/step - loss: 0.3654 - acc: 0.8691

Epoch 19/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.3607 - acc: 0.8711

Epoch 20/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.3558 - acc: 0.8726

Epoch 21/80

60000/60000 [==============================] - 1s 22us/step - loss: 0.3528 - acc: 0.8734

Epoch 22/80

60000/60000 [==============================] - 1s 23us/step - loss: 0.3492 - acc: 0.8745

Epoch 23/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3456 - acc: 0.8752

Epoch 24/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3416 - acc: 0.8771

Epoch 25/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3398 - acc: 0.8784

Epoch 26/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.3363 - acc: 0.8789

Epoch 27/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3315 - acc: 0.8798

Epoch 28/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3293 - acc: 0.8814

Epoch 29/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3261 - acc: 0.8831

Epoch 30/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3237 - acc: 0.8835

Epoch 31/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3212 - acc: 0.8833

Epoch 32/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3173 - acc: 0.8859

Epoch 33/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.3151 - acc: 0.8859

Epoch 34/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.3116 - acc: 0.8872

Epoch 35/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.3098 - acc: 0.8873

Epoch 36/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3085 - acc: 0.8884

Epoch 37/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3048 - acc: 0.8892

Epoch 38/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.3021 - acc: 0.8902

Epoch 39/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.3001 - acc: 0.8914

Epoch 40/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.2979 - acc: 0.8920

Epoch 41/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2946 - acc: 0.8929

Epoch 42/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.2923 - acc: 0.8929

Epoch 43/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2903 - acc: 0.8942

Epoch 44/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2879 - acc: 0.8954

Epoch 45/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.2849 - acc: 0.8955

Epoch 46/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2829 - acc: 0.8966

Epoch 47/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.2821 - acc: 0.8976

Epoch 48/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2801 - acc: 0.8976

Epoch 49/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2791 - acc: 0.8978

Epoch 50/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.2757 - acc: 0.8993

Epoch 51/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2734 - acc: 0.8998

Epoch 52/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2718 - acc: 0.9013

Epoch 53/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2688 - acc: 0.9020

Epoch 54/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2682 - acc: 0.9022

Epoch 55/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2648 - acc: 0.9033

Epoch 56/80

60000/60000 [==============================] - 2s 27us/step - loss: 0.2647 - acc: 0.9032

Epoch 57/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2613 - acc: 0.9051

Epoch 58/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2612 - acc: 0.9052

Epoch 59/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2583 - acc: 0.9046

Epoch 60/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2570 - acc: 0.9062

Epoch 61/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2547 - acc: 0.9070

Epoch 62/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2539 - acc: 0.9074

Epoch 63/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2531 - acc: 0.9073

Epoch 64/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2487 - acc: 0.9097

Epoch 65/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2484 - acc: 0.9100

Epoch 66/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2470 - acc: 0.9099

Epoch 67/80

60000/60000 [==============================] - 2s 30us/step - loss: 0.2443 - acc: 0.9105

Epoch 68/80

60000/60000 [==============================] - 2s 31us/step - loss: 0.2463 - acc: 0.9101

Epoch 69/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2414 - acc: 0.9112

Epoch 70/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2399 - acc: 0.9131

Epoch 71/80

60000/60000 [==============================] - 2s 30us/step - loss: 0.2394 - acc: 0.9125

Epoch 72/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2366 - acc: 0.9138

Epoch 73/80

60000/60000 [==============================] - 2s 30us/step - loss: 0.2359 - acc: 0.9145

Epoch 74/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2348 - acc: 0.9146

Epoch 75/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2326 - acc: 0.9156

Epoch 76/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2327 - acc: 0.9152

Epoch 77/80

60000/60000 [==============================] - 2s 28us/step - loss: 0.2314 - acc: 0.9157

Epoch 78/80

60000/60000 [==============================] - 2s 30us/step - loss: 0.2283 - acc: 0.9172

Epoch 79/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2270 - acc: 0.9178

Epoch 80/80

60000/60000 [==============================] - 2s 29us/step - loss: 0.2271 - acc: 0.9168

10000/10000 [==============================] - 0s 30us/step

Accuracy: 87.89%
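A minimal NumPy sketch of one Adam update in its textbook form (Kingma and Ba), assuming the Keras defaults `lr=0.001`, `beta_1=0.9`, `beta_2=0.999` that `optimizer='adam'` picks up, and an assumed `eps=1e-7`. Keras's own implementation folds the bias correction into the learning rate, so it may differ slightly in where epsilon enters. `adam_step` is an illustrative helper. With the same hypothetical gradient as a plain SGD step, the first Adam step moves every weight by roughly `lr`, whatever the gradient's size. This per-parameter normalization is one plausible reason Adam copes with the very small inputs produced by the double division by 255 in the "Model design" cell, though the runs above do not isolate that effect.

```python
import numpy as np

def adam_step(w, grad, m, v, t, lr=0.001, beta_1=0.9, beta_2=0.999, eps=1e-7):
    """One Adam update with bias correction; returns the new (w, m, v)."""
    m = beta_1 * m + (1 - beta_1) * grad          # running mean of gradients
    v = beta_2 * v + (1 - beta_2) * grad ** 2     # running mean of squared gradients
    m_hat = m / (1 - beta_1 ** t)                 # bias-corrected first moment
    v_hat = v / (1 - beta_2 ** t)                 # bias-corrected second moment
    w = w - lr * m_hat / (np.sqrt(v_hat) + eps)
    return w, m, v

w0 = np.zeros(3)
grad = np.array([0.5, -2.0, 1e-3])  # hypothetical gradient spanning three orders of magnitude
w1, m1, v1 = adam_step(w0, grad, np.zeros(3), np.zeros(3), t=1)
print(w1)  # each entry moves by about lr = 0.001, opposite to the sign of its gradient
```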


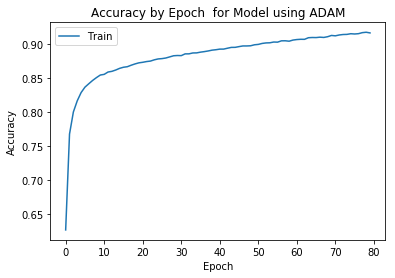

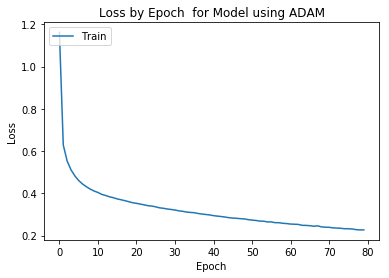

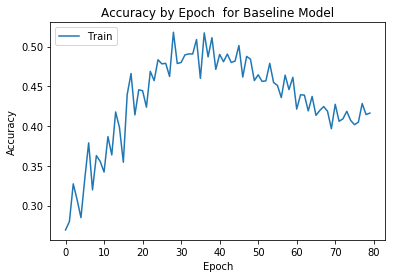

```python
# Histories
def plot_history(history, model_name):
    # Plot training accuracy by epoch (fit was called without validation data)
    plt.plot(history.history['acc'])
    plt.title('Accuracy by Epoch for ' + model_name)
    plt.ylabel('Accuracy')
    plt.xlabel('Epoch')
    plt.legend(['Train'], loc='upper left')
    plt.show()

    # Plot training loss by epoch
    plt.plot(history.history['loss'])
    plt.title('Loss by Epoch for ' + model_name)
    plt.ylabel('Loss')
    plt.xlabel('Epoch')
    plt.legend(['Train'], loc='upper right')
    plt.show()
```
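`plot_history` can only draw training curves because `fit` was called without validation data. A hedged sketch of a variant that also plots a validation curve whenever the history contains one, for example after passing `validation_data=(X_test, y_test)` to `model.fit`, which adds `val_`-prefixed keys. `curves_to_plot` and `plot_metric` are hypothetical helpers; the accuracy key is assumed to be `'acc'` as in the Keras 2.2 logs above (newer tf.keras releases name it `'accuracy'`).

```python
def curves_to_plot(history_dict, metric):
    """Return (label, values) pairs for a metric and, if present, its validation curve.

    history_dict is shaped like Keras's History.history, e.g. {'acc': [...], 'loss': [...]}.
    """
    curves = [("Train", history_dict[metric])]
    val_key = "val_" + metric
    if val_key in history_dict:
        curves.append(("Validation", history_dict[val_key]))
    return curves

def plot_metric(history_dict, metric, model_name):
    """Plot one metric per epoch; legend labels always match the lines drawn."""
    import matplotlib.pyplot as plt  # imported here so curves_to_plot works without matplotlib
    for label, values in curves_to_plot(history_dict, metric):
        # Epochs are numbered from 1, matching the Keras progress log
        plt.plot(range(1, len(values) + 1), values, label=label)
    plt.title(metric + " by Epoch for " + model_name)
    plt.xlabel("Epoch")
    plt.ylabel(metric)
    plt.legend(loc="best")
    plt.show()
```

Usage would look like `plot_metric(historyADAM.history, 'acc', 'Model using ADAM')`. Building the legend from the plotted curves avoids listing a "Test" entry for a line that was never drawn.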

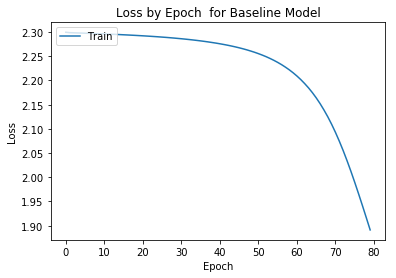

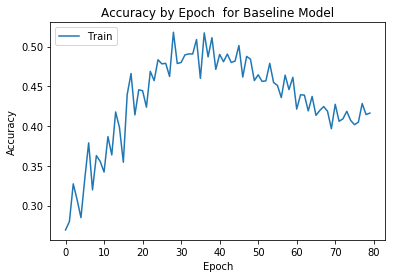

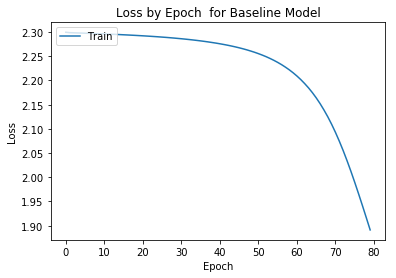

History of Baseline Model

```python
plot_history(history, "Baseline Model")
```

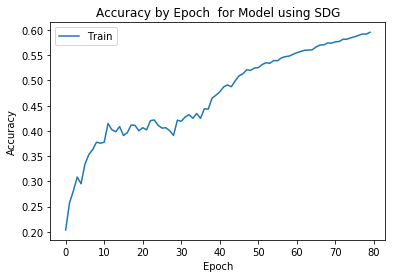

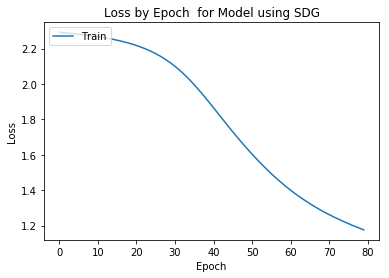

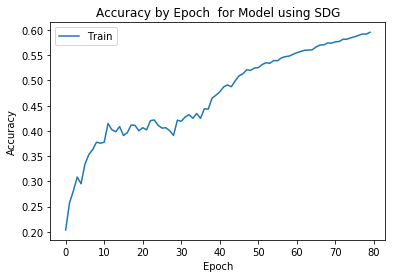

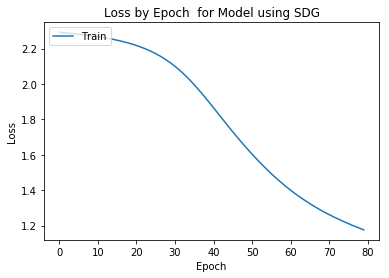

History of Model using SGD

```python
plot_history(historySDG, "Model using SGD")
```

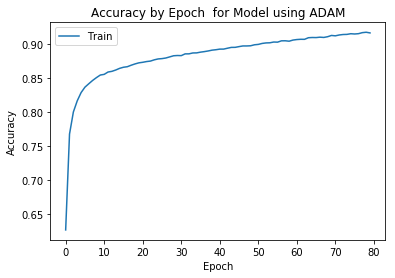

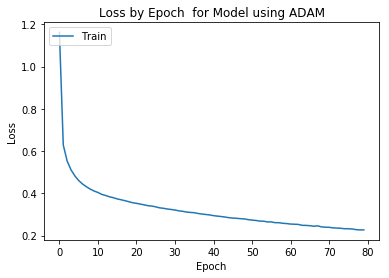

History of Model using ADAM

```python
plot_history(historyADAM, "Model using ADAM")
```